The birth of a storyteller

OMEA - why, where, what, when

Next week, we’re opening the doors.

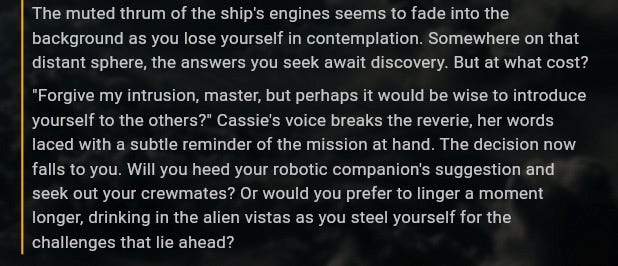

Omea’s first public demo goes live. Free access. One story. Anyone can play. No gatekeeping, no waitlists, no “apply for early access and we’ll get back to you in 6-8 weeks”. You show up, you play.

I’m terrified.

Not the impostor syndrome kind of terrified - I’ve written about that enough times that it’s become a familiar companion, like a roommate who never does the dishes but at least pays rent on time. This is a different flavor. This is the terror of showing people something you’ve poured everything into and watching them interact with it for the first time. Like handing your diary to a stranger and saying “so, what do you think?”

So many things can go wrong. The servers might buckle under traffic (I’m betting they will). Edge cases we didn’t catch will surface within the first hour (they always do). Someone will find a way to break the narrative in a way we never anticipated (looking forward to that one, actually - players are magnificently creative destroyers).

But before we get to next week, I want to tell you how we got here. Because the story of Omea starts with a question asked over drinks after a long day of teaching people about technology they didn’t yet realize would change their lives.

The question that started everything

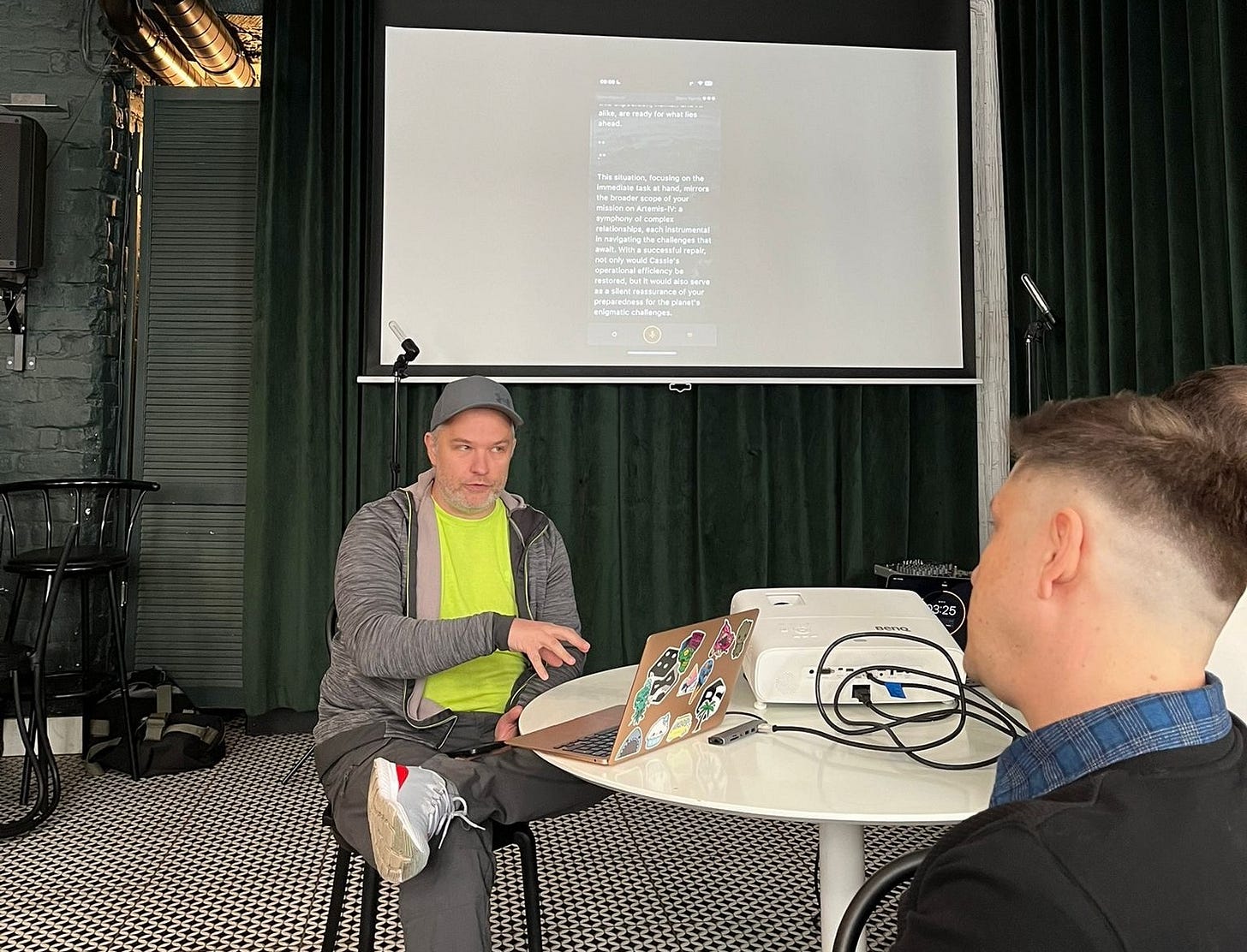

2023. I’d just wrapped up a full day of corporate training - back when teaching people about large language models was still a niche thing, before it became the default LinkedIn personality trait. Artur Kurasiński and I were decompressing. If you don’t know Artur, he’s my co-founder and CMO at Omea now, but back then he was just my friend. A fellow nerd. A fellow traveler in the same weird corner of geekdom that I’ve inhabited since I was young.

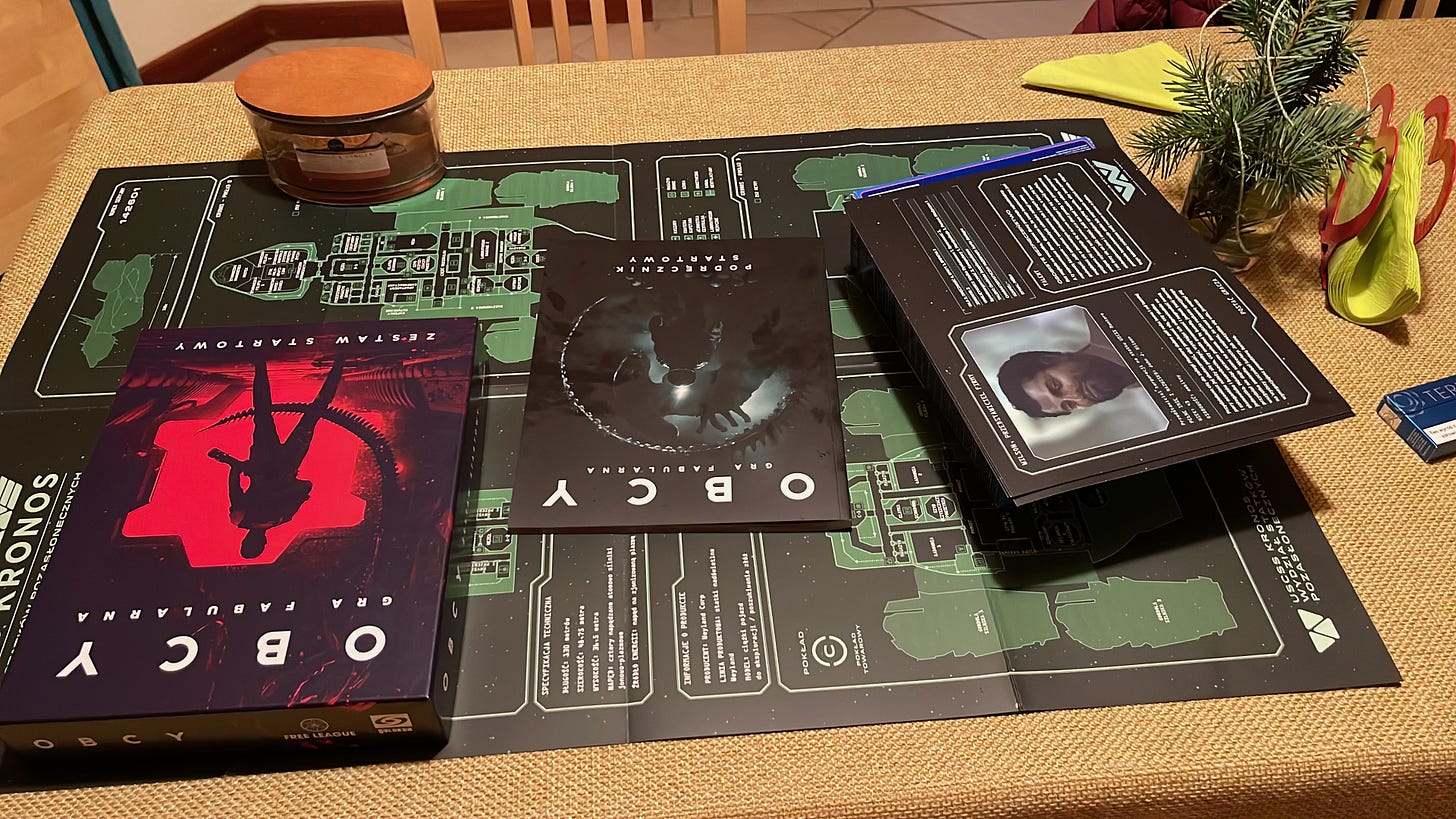

We’re both pen-and-paper RPG players. Have been for decades. And before I go further, let me explain what that means for anyone who hasn’t experienced it, because it’s central to everything Omea is trying to do.

Pen-and-paper RPGs - think Dungeons & Dragons, but that’s just the most famous one - are collaborative storytelling games. A group of people sit around a table. One person is the Game Master (GM). The GM creates the world, controls the non-player characters, describes the environment, manages the plot. Everyone else plays a character in that world - makes choices, interacts with the story, pushes the narrative in directions nobody predicted.

The GM is part writer, part improv actor, part psychologist, part god. They hold the entire narrative in their head. They know the backstory, the plot threads, the emotional arcs. But here’s the crucial thing: they can’t script what happens. The players have free will. Someone will always do something unexpected. The GM’s job is to adapt, in real time, while maintaining coherence and emotional impact.

When a great GM is running a session, something magical happens. You stop thinking about rules and dice and character sheets. You’re in the story. You feel genuine tension during a heist. You care about NPCs who only exist in someone’s imagination. You remember sessions from years ago like they actually happened to you.

That’s what Artur asked me about.

“When will LLMs be powerful enough to be a Game Master? Not a chatbot that feels like a game of Mad Libs - an actual storyteller. Someone who builds narrative, paints believable characters, surprises you with fresh ideas instead of clichés.”

I thought about it for maybe five seconds.

“Not anytime soon. Years. Maybe a decade.”

How spectacularly wrong I was.

The three problems nobody was solving

Here’s the thing about being a tinkerer with ADHD (my superpower and my curse, as documented in a previous essay): when someone poses a question that interesting, my brain doesn’t file it away for later consideration. It latches on. That night, instead of doing the sensible thing and sleeping next to my better half, I started experimenting. Small stuff at first. Then bigger. Then I sold my car.

But I’m getting ahead of myself.

The more I dug into the problem of AI storytelling, the more I understood why nobody had cracked it. There were three fundamental issues, and all three were showstoppers.

The narrative coherence problem. Ask any frontier LLM to write you a paragraph of fiction. It’ll be good. Maybe even great. Ask it to write a few pages that hang together. Probably still works. Now ask it to maintain a coherent, emotionally satisfying narrative across hours of interactive play. Off the rails. Every time. The model forgets what it set up earlier. Plot threads dissolve. Characters lose their personalities. The Hero’s Journey - the fundamental narrative structure I wrote about a few weeks ago - gets abandoned for a cycle of “what should the player do next?” followed by whatever the model’s attention mechanism happens to prioritize.

LLMs are incredible at generating plausible next sentences. They’re terrible at holding a story.

There’s a deeper issue here, too. In the standard LLM paradigm, you have a “user” and an “assistant”. They take turns. Ping-pong. The user asks, the assistant responds. This architecture leaks into any storytelling attempt built on top of it. The player becomes a god-like figure issuing commands from above, and the AI becomes a dutiful servant trying to please. That’s not how stories work. In a great narrative, the player isn’t a user with admin privileges - they’re an actor inside the world. One of many. Subject to the same rules, the same consequences, the same narrative forces as everyone else. The moment you give the player god-mode, you kill the stakes. And without stakes, there’s no story.

The emotional intelligence problem. For a narrative to work - really work, the way it does when a great GM is running a session - the AI needs to understand emotional dynamics. Not just of the player, but of the characters it portrays. NPCs need to feel like people, not cardboard cutouts dispensing quest information. They need motivations, contradictions, fears. Here’s something fascinating I learned from Tomek Kolinko: the same emotional rules that govern believable characters also apply to locations and objects. A haunted mansion isn’t just a physical description - it has emotional weight, history, presence. Getting this right requires something deeper than what standard LLMs offer.

The memory problem. We wanted players to live stories that span hours, not minutes. Think about what that means in terms of context. You need to remember everything: what the player said in the first scene, the promise they made to an NPC three hours ago, the subtle shift in a character’s loyalty that happened because the player chose to lie about something seemingly insignificant. We’re talking about ten million tokens of coherent, structured memory. Not just a giant context window - you can’t just dump ten million tokens into a prompt and pray. And standard retrieval systems don’t cut it either. Simple indexing and cosine similarity - the backbone of most RAG systems - can’t grasp the intricate connections between emotions, timeline, and cause-and-effect chains. “The player was kind to the merchant in chapter two” and “the merchant’s daughter is now in danger in chapter seven” are semantically distant in embedding space but narratively inseparable. Memory for storytelling isn’t a database lookup. It’s emotional, temporal, interconnected. What matters to the story changes over time. What was trivial in act one becomes pivotal in act three. The memory system needs to understand that.
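A toy sketch of that gap, under loud assumptions: word counts stand in for real embeddings, the "distractor" memory and all event ids are invented for illustration, and the link table is a hypothetical structure, not how our memory system actually works. It only shows why surface similarity can rank an irrelevant memory above the one the story depends on.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    """Crude stand-in for an embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Two remembered events (ids and the distractor text are invented).
memory = {
    "kindness": "The player was kind to the merchant in chapter two",
    "distractor": "The innkeeper's daughter is now in town in chapter seven",
}
current_event = "The merchant's daughter is now in danger in chapter seven"

query = bag_of_words(current_event)
scores = {eid: cosine(bag_of_words(text), query) for eid, text in memory.items()}
best_by_similarity = max(scores, key=scores.get)

# Hypothetical explicit links: event id -> earlier events it depends on.
narrative_links = {"daughter_in_danger": ["kindness"]}


def linked_events(event_id, links):
    """Collect every earlier event reachable through the narrative links."""
    found, stack = [], list(links.get(event_id, []))
    while stack:
        node = stack.pop()
        if node not in found:
            found.append(node)
            stack.extend(links.get(node, []))
    return found


print(best_by_similarity)                                    # surface match wins
print(linked_events("daughter_in_danger", narrative_links))  # follows the story link
```

Similarity search picks the memory that merely sounds like the current scene. The explicit link recovers the act of kindness, even though it shares few words with the danger it now connects to.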

Three problems. Three reasons why every AI storytelling attempt before us felt like a party trick that got old after five minutes.

The roads not taken (and the one we did)

So how do you fix this?

The obvious answer in 2025 and 2026 is agents. Build a multi-agent system. One agent handles narrative planning. Another manages character consistency. A third handles memory retrieval. A fourth does emotional modeling. Then you realize you also need a sentiment analysis agent. And a writing style consistency agent. And a coherency-in-time judge that tracks cause-and-effect chains. And a continuity checker. And... you see where this goes. You can add agents endlessly, each one solving a real problem, each one adding another layer of complexity to the stack. Coordinate them all with an orchestrator, and boom - you’ve solved it.

Except you haven’t. You’ve created a Rube Goldberg machine. Every agent adds latency. Every coordination point is an opportunity for errors to compound. You’re burning a hundred tokens behind the scenes for every token the player actually sees. And it’s slow. Interactive storytelling demands responsiveness. When a player makes a choice, they need to feel the story react. A multi-second delay while your agent swarm argues about what should happen next kills the magic faster than a bad plot twist.

It’s like using a battle rifle to shoot a mosquito during a quiet night. You can, technically. But should you?
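To put rough numbers on that overhead, here is a back-of-the-envelope sketch. Every figure in it is an illustrative assumption, not a measurement: the agent names come from the list above, but the token counts and latencies are made up, and the agents are assumed to run one after another.

```python
# All numbers below are illustrative assumptions, not measurements.
agents = [
    {"name": "narrative_planner", "hidden_tokens": 1500, "latency_ms": 800},
    {"name": "character_consistency", "hidden_tokens": 1000, "latency_ms": 600},
    {"name": "memory_retrieval", "hidden_tokens": 1500, "latency_ms": 900},
    {"name": "emotional_modeling", "hidden_tokens": 1000, "latency_ms": 700},
]


def pipeline_overhead(agents, visible_tokens):
    """Sum hidden tokens and latency for agents that run sequentially."""
    hidden = sum(a["hidden_tokens"] for a in agents)
    latency_ms = sum(a["latency_ms"] for a in agents)
    return {
        "hidden_tokens": hidden,
        "hidden_per_visible": hidden / visible_tokens,
        "latency_ms": latency_ms,
    }


# One short story beat shown to the player: 50 visible tokens (assumed).
overhead = pipeline_overhead(agents, visible_tokens=50)
print(overhead)
```

With these made-up inputs, four agents already cost a hundred hidden tokens per visible one and three seconds of waiting, before the orchestrator or any retries are counted. Each extra agent adds to both sums.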

The second option was to train our own model. This was the direction that made sense to me - the Skunk Works approach. Don’t rely on someone else’s general-purpose tool. Build the specialized thing yourself, designed from the ground up for the specific job you need done.

But that road had its own walls. We started in the early days, when the best open-source options were Alpaca and Vicuna - early Meta Llama derivatives that feel almost quaint now. We quickly learned that even with specialized datasets - and I’m talking about over 155,000 carefully curated and annotated novels and scripts - a standard Transformer architecture just predicting the most probable next token wasn’t going to develop narrative intelligence on its own. You can feed it Shakespeare’s complete works and it still won’t understand why Hamlet delays.

We explored the landscape. The dominant GPT-style architecture - autoregressive, left-to-right, always guessing the next token in sequence - was clearly insufficient for our needs. BERT-style architectures looked more promising at first. Masked language modeling, where the model learns to understand context from both directions rather than just predicting forward, felt closer to how narrative comprehension actually works. But BERT wasn’t designed for generation, and adapting it created its own set of headaches.

Neither approach was enough on its own. And we weren’t the only ones realizing that standard Transformers had limitations. Look at what NVIDIA did with Nemotron, or IBM with Granite - marrying Transformer architectures with Mamba (a state-space model) to get the best of both worlds. Different teams, different solutions, all arriving at the same conclusion: the vanilla Transformer isn’t the end of the road.

We went our own way. What we built isn’t a regular LLM. You won’t find it as a model card on Hugging Face. It’s a hybrid architecture born out of specific narrative requirements, not general-purpose benchmarks.

The third piece, and the one that changed everything, was understanding narrative itself. Not from an AI perspective - from a storytelling perspective. How does the Hero’s Journey actually work as a structural framework? Why did ancient playwrights divide stories into acts? What makes a player feel like a participant rather than a passenger?

We needed someone who understood storytelling at its bones. That someone was Casey McBeath, who became our Creative Director and brought over a decade of experience as an LA-based cinematographer to the founding team. That’s not a decoration on his resume - it means Casey has spent years understanding how stories move audiences, how scenes are structured for emotional impact, how pacing and tension work not in theory but in practice, on real productions. You can teach an AI to recognize patterns in text. You can’t teach it taste. Casey brought the taste.

Blood, sweat, and GPT-2

So we combined all three approaches, because of course we did. (One project at a time was never really my style - greybeard speaking.)

First iteration: experiments with GPT-2 combined with LSTM networks and a mutated architecture of our own design. We started training on our curated datasets. Small models, 13 billion parameters.

We failed.

But - and this is the part that doesn’t show up in the startup mythology - the second iteration of that approach showed promise. Not quality you’d ship. Not quality you’d show anyone except your co-founders at 3 AM when everyone’s sleep-deprived enough to see potential in garbage output. But the thesis was working. The architecture was doing something that standard approaches couldn’t: it was maintaining narrative awareness across longer sequences. It was, in its clumsy newborn way, trying to tell a story instead of just predicting words.

Let me be clear because I refuse to sell bullshit from any stage, virtual or otherwise: the output was bad. The quality was low. If you’d played it, you would have been unimpressed. But we could see the shape of what it could become, and that gave us wings.

Burning the boats

Long story short, I put everything I had into this project.

Everything.

And let me be specific about why this costs money, because people outside of AI sometimes don’t grasp the economics of what we were doing. Training models isn’t cheap - you’re renting expensive GPU clusters by the hour, and a single training run can eat through thousands of dollars before you know if it worked. Building proper datasets requires buying source material - those 155,000 curated texts didn’t materialize from thin air. You need to pay coders, because even a small Skunk Works team needs to eat while they’re building the impossible. And through all of this, you’re not drawing a salary from your own company. You’re the one throwing more money on the fire at every stage, not the one pulling it out.

Oh, and it’s generally helpful to not be homeless and to have something edible in the fridge. Basic requirements that become surprisingly non-trivial when your R&D budget is also your grocery budget.

Sold cryptocurrency. Took on more work, worked harder, earned more, and poured every extra zloty into keeping the research alive. Let go of the dream of building a home in Poland. And at one point - I’m not being dramatic here, this is just what happened - I sold my car to make it to the next month of compute costs.

Bootstrapping an AI company with heavy R&D requirements and no early customers to show traction is not the romantic adventure that startup Twitter would have you believe. There’s no “building in public” content strategy when your primary public activity is figuring out how to pay the cloud bill. There’s no “fail fast” when every failure costs you money you don’t have.

I needed to be sure we could pull this off before taking investor money. That might sound backwards to the “raise first, figure it out later” crowd, but this is a Skunk Works principle I’ve written about before: know your numbers. Know what you’re building. Know that the impossible thing is merely very difficult before you ask someone else to bet on it with you.

Those were hard days. Anyone who tells you bootstrapping is about “enjoying the journey” has never sold their car to fund a training run.

The breakthrough

And then, after a while, we pulled it off.

I won’t go deep on the technical architecture here - that essay deserves its own dedicated space, and I promise it’s coming. But the short version is this: our Narrative Intelligence Architecture (NIA, because every good project needs a name that sounds like a person) combines our custom-trained model with a deep understanding of narrative structure. It doesn’t use an agent swarm. It doesn’t burn hundreds of tokens in hidden coordination. It’s an orchestration system built on a zero-agent approach - one model, carefully tuned, doing the work that others try to accomplish with sprawling multi-agent infrastructure.

The result is faster, more coherent, and cheaper to run than the alternative. Not because we’re smarter than everyone else. Because we asked a different question. Instead of “how do we make LLMs better at storytelling?” we asked “how do we build a system that understands storytelling and uses LLMs as one component?”

Everyone in AI right now is racing toward more agents, better IQ, higher benchmark scores, more parameters, more data. The arms race is about making models smarter.

We made a bet that the blue ocean wasn’t IQ. It was EQ - Emotional Intelligence.

Not another state-of-the-art super-large LLM with an IQ higher than mine and better coding skills than I’ll ever have. The world has enough of those, and more are coming every quarter. What the world doesn’t have is AI that understands emotional dynamics, narrative structure, the human experience of being inside a story.

That’s what we built. That’s what you’ll get to try next week.

Standing at the edge

So here I am. Standing in front of you, just before our shot at the moon.

The demo will be free. One story, accessible to everyone. It’s a test for everything: the product, the technology, the narrative design, the infrastructure, the storytellers, the coders. Everything and everyone.

Will things break? Almost certainly. Will someone find an edge case that makes me want to hide under my desk? Probably before lunch on day one.

But that’s why we’re launching. Not because it’s perfect - it’s not, and anyone who ships a perfect v1 is either lying or hasn’t shipped. We’re launching because the only way to know if this works is to put it in front of real people and watch what happens. Ship and iterate. Kelly Johnson would approve.

I strongly believe we’ve built something unique. Not unique in the “our landing page says we’re unique” way, but unique in the “try it and tell me you’ve experienced this before” way. The UVP isn’t a marketing claim. It’s a feeling you’ll get when the story surprises you in a way that feels earned, not random.

Come play. Break things. Tell me what worked and what didn’t. That’s how we get better.

Less talking, more building. See you on the other side of launch.

Max

PS. In other news: I got married! Justine and I made it official, and it’s been awesome. Though honestly? For us, basically nothing changed except we have rings now. We loved each other before the ceremony, we love each other after. The best kind of non-event.

PS2. Here’s something I’ve been sitting on: NIA - our narrative intelligence system - is currently being used by two companies in industries completely unrelated to gaming. Turns out the need for high Emotional Intelligence in AI isn’t limited to interactive storytelling. We might have built something bigger than we originally aimed for. Are we going to kill OpenAI or Anthropic? Definitely not. Are we going to succeed? I believe so, with everything I’ve got.

PS3. Yeah, I know. Three PS sections. My ADHD has entered the chat. But I wanted to say - if you’re reading this and you’re a pen-and-paper RPG player, a Game Master, a storyteller of any kind: come try this. You’ll understand what we’re doing faster than anyone, because you’ve been doing it manually your whole life. We just taught a machine to join your table.
